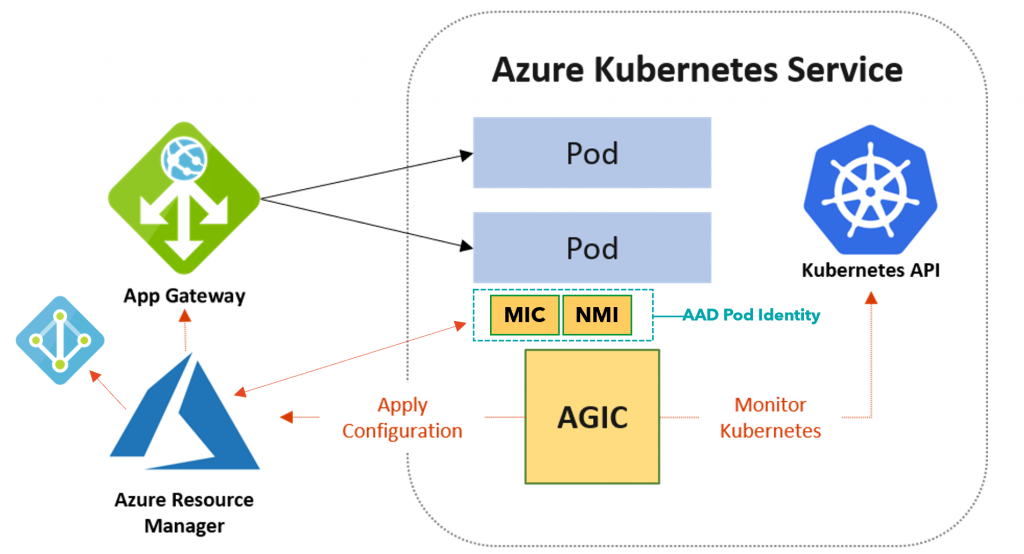

In Kubernetes, you have a container or containers running as a pod. In front of the pods, you have something known as a service. A service is simply an abstraction that defines a logical set of pods and how to access them. As pods move around, the service they are bound to keeps track of where they are running. For external access, there is typically an ingress controller that lets traffic from outside the Kubernetes cluster reach a service. An ingress defines the rules for inbound connections.
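To make the pod and service relationship a little more concrete, here is a minimal sketch that writes a Service manifest to a file. The service name `myapp`, the label `app: myapp`, and the target port are assumptions for illustration; they are chosen to line up with the sample ingress at the end of this post, which points at a service called myapp on port 8080.

```shell
set -eu

# Write a minimal Service manifest. The selector is what binds the service to
# its pods: any pod carrying the label app=myapp becomes a backend, no matter
# which node it is scheduled on.
cat > myapp-service.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: myapp            # assumed name, matches the sample ingress backend
spec:
  selector:
    app: myapp           # assumed pod label
  ports:
    - port: 8080         # port the service exposes
      targetPort: 8080   # assumed container port
EOF

# Show the manifest that was written.
cat myapp-service.yaml

# To create it on a cluster you would then run:
#   kubectl apply -f myapp-service.yaml
```

An ingress rule then only needs to reference the service name and port; it never has to know about individual pods.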

Microsoft has had an Application Gateway Ingress Controller for Azure Kubernetes Service (AKS) in public preview for some time and recently released it to general availability (GA). The Application Gateway Ingress Controller (AGIC) monitors the Kubernetes cluster for ingress resources and makes changes to the specified Application Gateway to allow inbound connections.

This allows you to use the Application Gateway service in Azure as the entry point into your AKS cluster. In addition to the Application Gateway's standard set of functionality, the AGIC uses the Application Gateway Web Application Firewall (WAF). In fact, that is the only version of the Application Gateway that is supported by the AGIC. The great thing about this is that you can put the Application Gateway's WAF protection in front of your applications that are running on AKS.

This blog post is not a detailed deep dive into AGIC. To learn more about AGIC visit this link: https://azure.github.io/application-gateway-kubernetes-ingress. In this blog post, I want to share a script I built that deploys the AGIC. There are many steps to deploying the AGIC, and I figured this is something folks will need to do over and over, so it makes sense to make it a little easier. You won’t have to worry about creating a managed identity, getting various IDs, downloading and updating YAML files, or installing helm charts. This script will also be useful if you are not familiar with sed and helm commands. It combines PowerShell, Azure CLI, sed, and helm code. I have already used this script about 10 times myself to deploy the AGIC and boy has it saved me time. I thought it would be useful to someone out there and wanted to share it.

You can download the script here: https://github.com/Buchatech/Application-Gateway-Ingress-Controller-Deployment-Script

I typically deploy RBAC-enabled AKS clusters, so this script is set up to work with an RBAC-enabled AKS cluster. If you are deploying AGIC for a non-RBAC AKS cluster, be sure to view the notes in the script and adjust a couple of lines of code to make it non-RBAC ready. Also note that this AGIC script is focused on brownfield deployments, so before running it there are some components you should already have deployed. These components are:

- VNet and 2 Subnets (one for your AKS cluster and one for the App Gateway)

- AKS Cluster

- Public IP

- Application Gateway

The script will deploy and do the following:

- Deploys the AAD Pod Identity.

- Creates the Managed Identity used by the AAD Pod Identity.

- Gives the Managed Identity Contributor access to Application Gateway.

- Gives the Managed Identity Reader access to the resource group that hosts the Application Gateway.

- Downloads and renames the sample-helm-config.yaml file to helm-agic-config.yaml.

- Updates the helm-agic-config.yaml with environment variables and sets RBAC enabled to true using sed.

- Adds the Application Gateway ingress helm chart repo and updates the repo on your AKS cluster.

- Installs the AGIC pod using a helm chart and environment variables in the helm-agic-config.yaml file.
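If you have not used sed before, the config update step in the list above can be sketched roughly as follows. This is not the code from the script; it is a minimal, offline illustration. The placeholder names in the stand-in file (such as `<subscriptionId>`) and all of the values are assumptions, so check them against the real sample-helm-config.yaml before relying on them.

```shell
set -eu

# Illustrative values only; the real script collects these from Azure.
subscription_id="00000000-0000-0000-0000-000000000000"
resource_group="my-rg"
appgw_name="my-appgw"
identity_client_id="11111111-1111-1111-1111-111111111111"
identity_resource_id="/subscriptions/${subscription_id}/resourcegroups/${resource_group}/providers/Microsoft.ManagedIdentity/userAssignedIdentities/agic-identity"

# Stand-in for the downloaded sample file (placeholder names are assumed).
cat > sample-helm-config.yaml <<'EOF'
appgw:
    subscriptionId: <subscriptionId>
    resourceGroup: <resourceGroupName>
    name: <applicationGatewayName>
armAuth:
    type: aadPodIdentity
    identityResourceID: <identityResourceId>
    identityClientID: <identityClientId>
rbac:
    enabled: false
EOF

# Replace each placeholder and flip RBAC to true. The | delimiter is used
# instead of / because the identity resource ID itself contains slashes.
sed -e "s|<subscriptionId>|${subscription_id}|" \
    -e "s|<resourceGroupName>|${resource_group}|" \
    -e "s|<applicationGatewayName>|${appgw_name}|" \
    -e "s|<identityResourceId>|${identity_resource_id}|" \
    -e "s|<identityClientId>|${identity_client_id}|" \
    -e "s|enabled: false|enabled: true|" \
    sample-helm-config.yaml > helm-agic-config.yaml

# Print the result so the substitutions can be checked by eye.
cat helm-agic-config.yaml
```

Writing to a new file instead of editing in place keeps the original sample around, which is handy if you need to rerun the substitution with different values.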

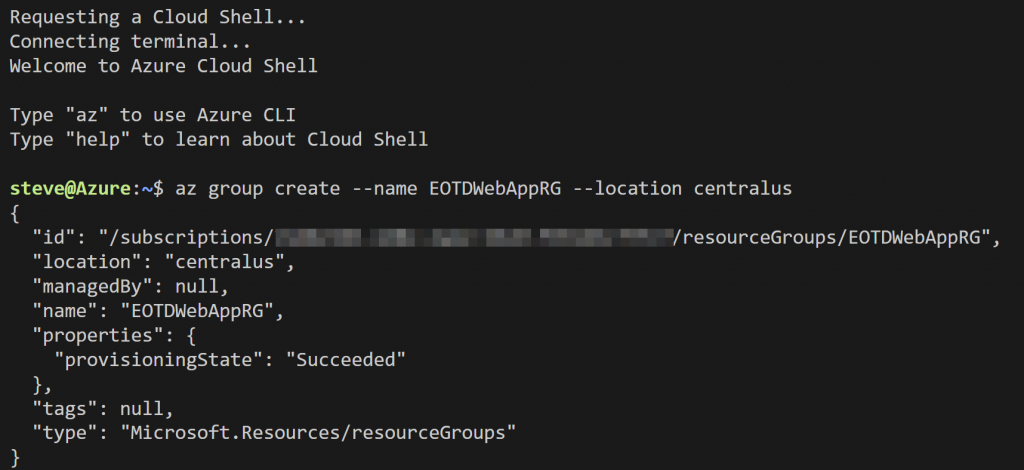

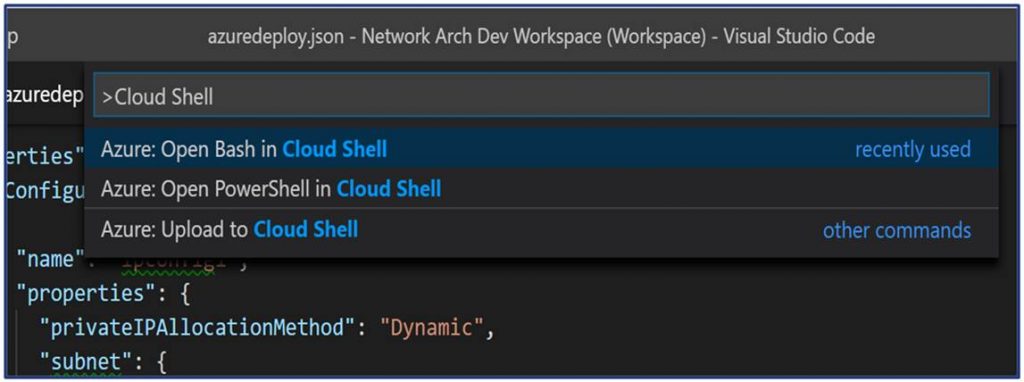

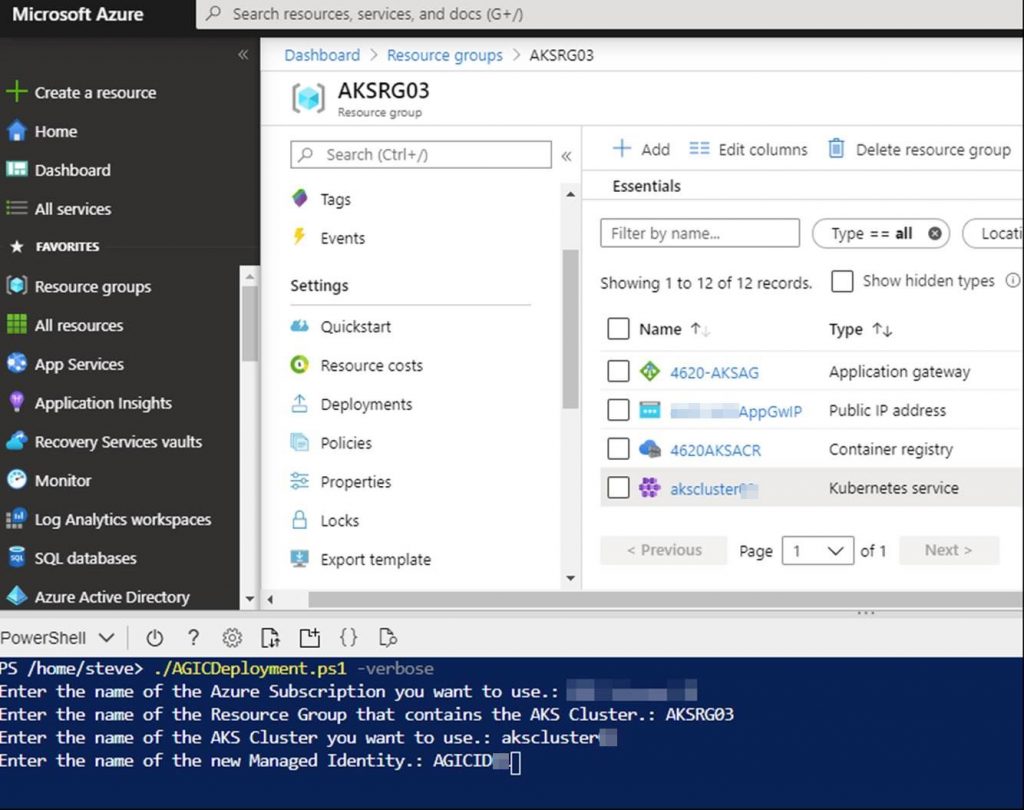

Now let’s take a look at running the script. I recommend uploading the script to Azure Cloud Shell (PowerShell) and running it from there. Run:

    ./AGICDeployment.ps1 -verbose

You will be prompted for the following as shown in the screenshot:

Enter the name of the Azure Subscription you want to use.:

Enter the name of the Resource Group that contains the AKS Cluster.:

Enter the name of the AKS Cluster you want to use.:

Enter the name of the new Managed Identity.:

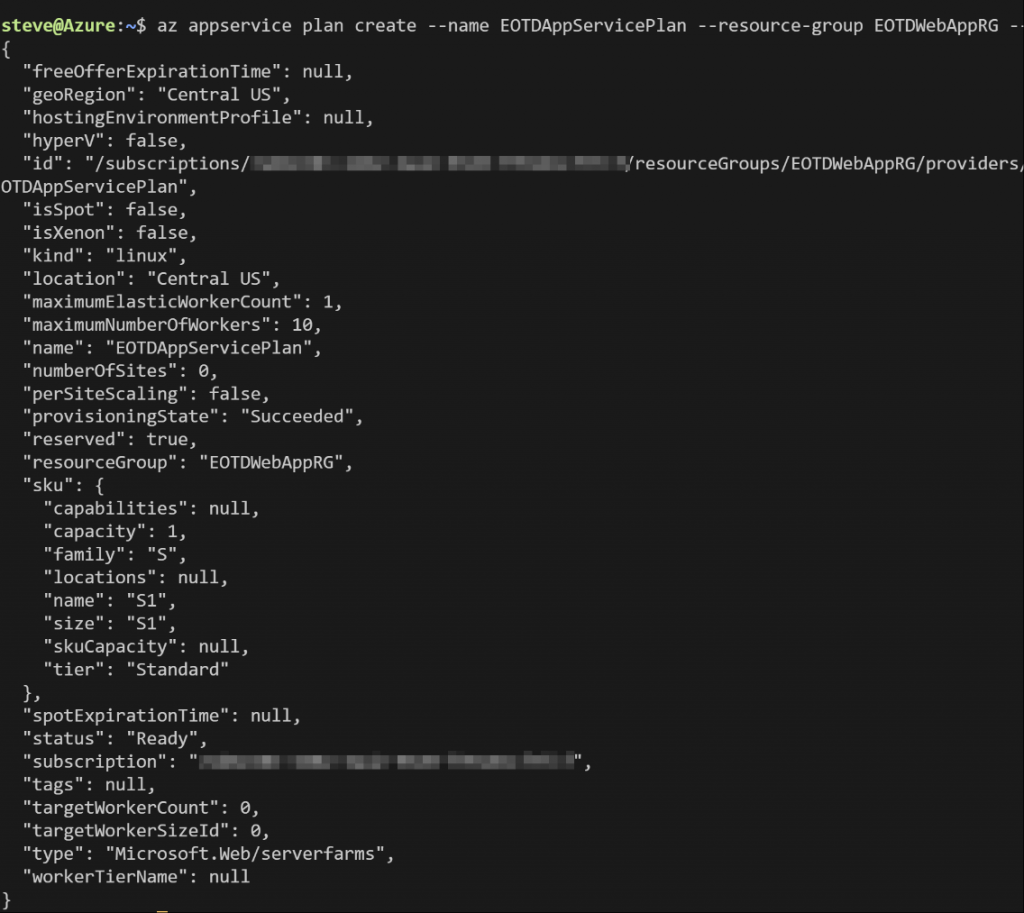

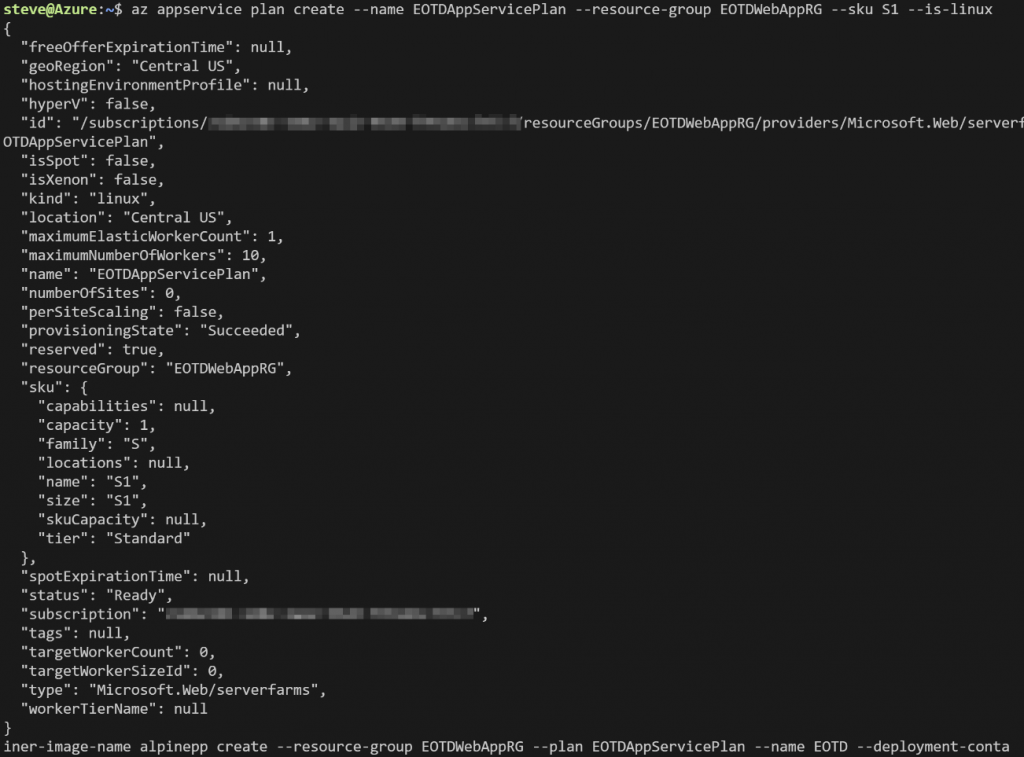

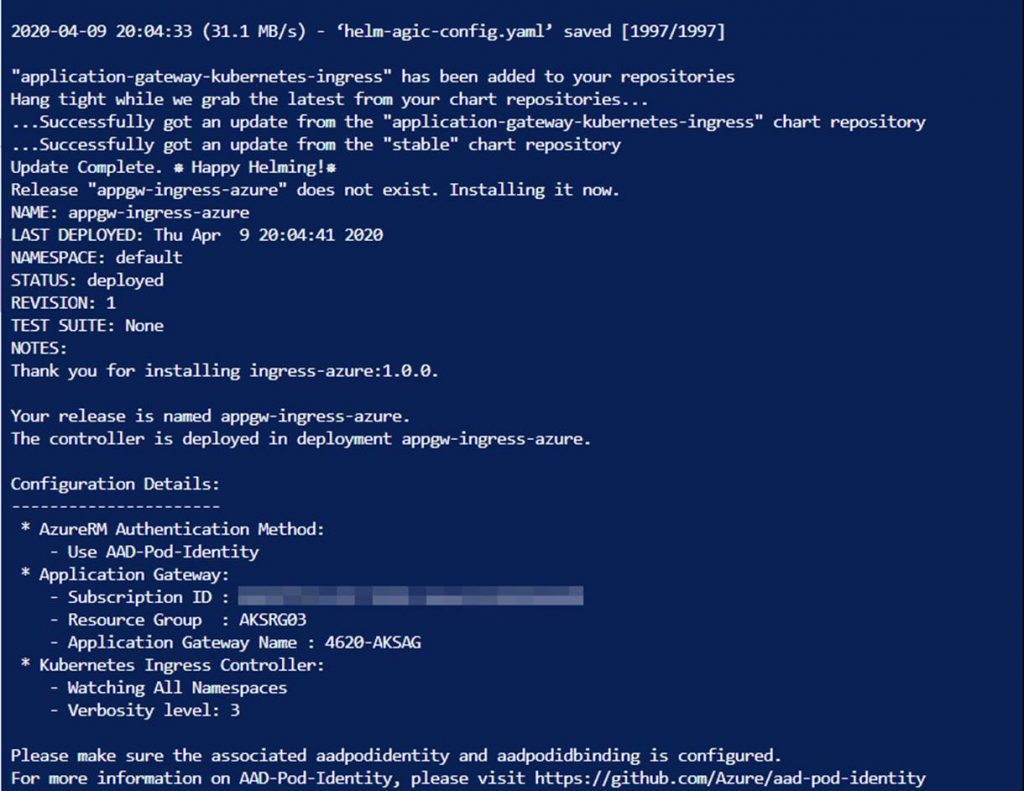

Here is a screenshot of what you will see while the script runs.

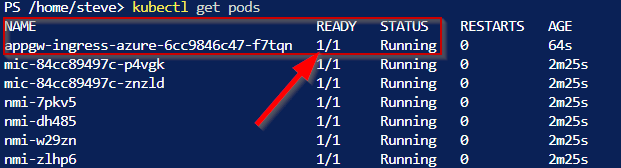

That’s it. You don’t have to do anything except enter the values when the script starts. To verify that your new AGIC pod is running, you can check a couple of things. First, run:

    kubectl get pods

Note the name of my AGIC pod is appgw-ingress-azure-6cc9846c47-f7tqn. Your pod name will be different.

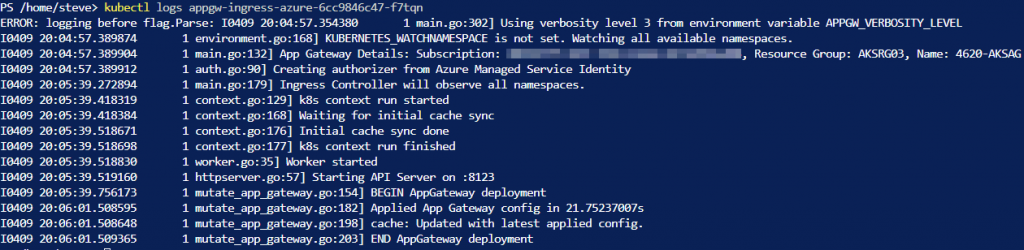

Now you can check the logs of the AGIC pod by running:

    kubectl logs appgw-ingress-azure-6cc9846c47-f7tqn

You should not see any errors, but if there are any they will show up in the log. If everything ran fine the output log should look similar to:

After it’s all said and done, you will have a running Application Gateway Ingress Controller that is connected to the Application Gateway and ready for new ingresses.
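Since the pod name changes with every deployment, you may prefer to look it up instead of copying it by hand. Here is a small sketch of one way to do that. The helper name `first_pod_with_prefix` is hypothetical, the prefix `appgw-ingress-azure` is taken from my pod name above and may differ in your cluster, and the sample rows are made up so the snippet can run without a cluster.

```shell
set -eu

# Print the name of the first pod whose name starts with the given prefix.
# Expects `kubectl get pods --no-headers` style rows on stdin.
first_pod_with_prefix() {
    awk -v prefix="$1" 'index($1, prefix) == 1 { print $1; exit }'
}

# Made-up sample rows standing in for real kubectl output.
sample_output='some-other-pod-5d4f8c7b9-abcde         1/1   Running   0   3d
appgw-ingress-azure-6cc9846c47-f7tqn   1/1   Running   0   5m'

# Pick out the AGIC pod from the sample rows and print its name.
agic_pod=$(printf '%s\n' "$sample_output" | first_pod_with_prefix "appgw-ingress-azure")
echo "$agic_pod"
```

Against a live cluster, you would feed it real output instead, for example `kubectl logs "$(kubectl get pods --no-headers | first_pod_with_prefix appgw-ingress-azure)"`.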

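This script does not deploy any ingress into your AKS cluster. You will need to do that separately, as needed. The following is example YAML for an ingress. You can use it to create an ingress for an application running in your AKS cluster. Note that the extensions/v1beta1 Ingress API used here was removed in Kubernetes 1.22, so newer clusters need the networking.k8s.io/v1 form instead.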

    apiVersion: extensions/v1beta1
    kind: Ingress
    metadata:
      name: myapp
      annotations:
        kubernetes.io/ingress.class: azure/application-gateway
    spec:
      rules:
      - http:
          paths:
          - path: /
            backend:
              serviceName: myapp
              servicePort: 8080

Thanks for reading and check back soon for more blogs on AKS and Azure.