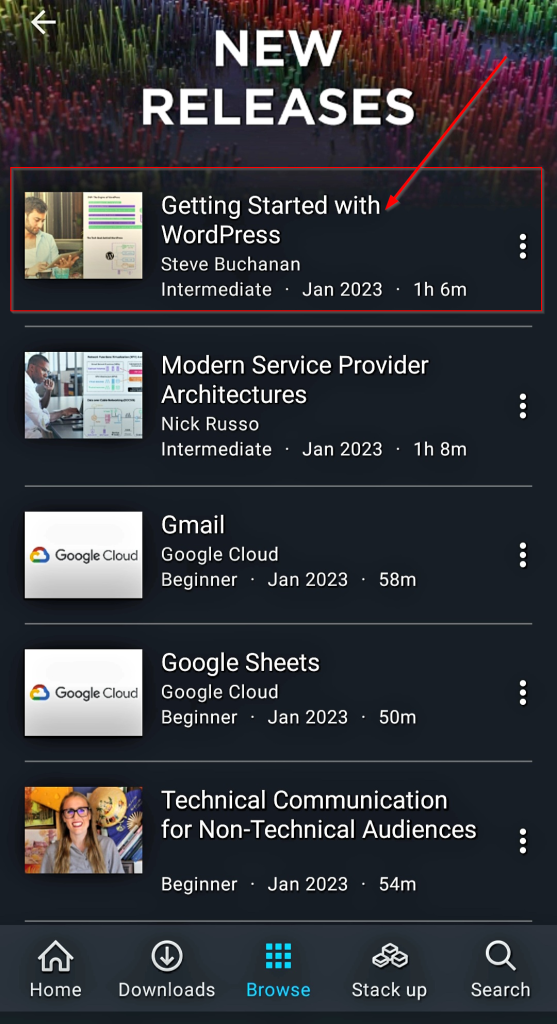

This week I published my second Pluralsight course of the year. This marks my 19th course! The course is titled “Getting Started with WordPress”. Startups, enterprises, and everyone in between continue to adopt content management systems at a fast rate, with WordPress holding a 60% market share. That makes WordPress the number one choice for organizations of every size. It is used for many purposes, including web apps, marketing, e-commerce, and even a company’s main website.

I have been working with WordPress in various capacities for over sixteen years. I use WordPress for this blog and have used it for websites, hosted it for businesses, administered WordPress sites for customers, done WordPress development for customers, and even managed the development of WordPress plugins. With all of my history with WordPress, I was excited when the opportunity came up to build a course about it.

This course is ideal for bloggers, entrepreneurs, product managers, marketing managers, marketing executives, marketing consultants, marketing employees, web developers, project managers, business analysts, web designers, graphic designers, UX/UI designers, and anyone interested in content management systems, specifically WordPress.

This course will take you from little to no knowledge of WordPress to a place where you are confident enough to get started. Whether you want to create a personal blog, a business website, or an online store, WordPress is a skill you should have, and this course has you covered.

In this course, Getting Started with WordPress, you’ll first learn its many uses, features, and tech stack, and explore the options for hosting it. Next, you’ll learn how to install it. Finally, you’ll discover its user interface and general configuration.

By the end of this course, you will have a better understanding of content management systems and of WordPress itself, including its uses, features, and tech stack. You will also know how to get a domain name, set up hosting, and install WordPress, and you will have taken a tour of its interface and general configuration.

Check out the “Getting Started with WordPress” course here:

https://www.pluralsight.com/courses/wordpress-getting-started

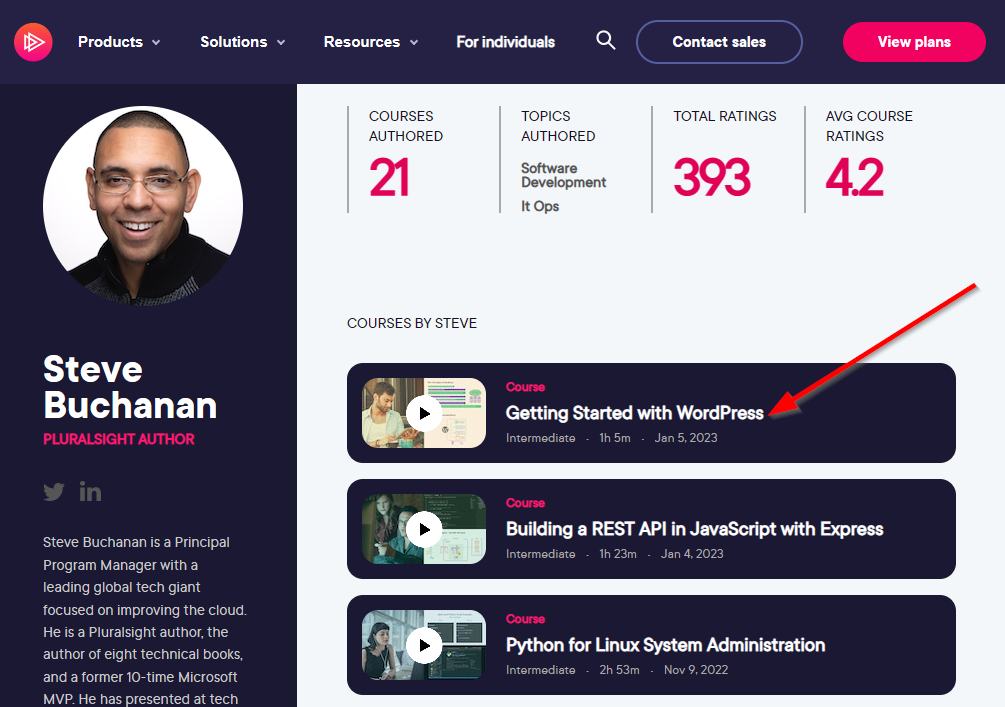

I hope you find value in this new Getting Started with WordPress course. Be sure to follow my profile on Pluralsight so you will be notified as I release new courses!

Here is the link to my Pluralsight profile to follow me: